As AI accelerates along an exponential curve of features and intelligence, there are hidden risks and opportunities that require active governance strong enough to deliver transparency, yet robustly adaptable to emerging regulations and unintended consequences. Domain and industry leaders are facing unfamiliar challenges as the rush for scalable results breaks proven deployment and oversight solutions.

Prior to 2023, artificial intelligence (AI) was researched in universities, but its lure was held back by limits on scalability, performance, and capability. What a difference 15 months has made to our business models and to what must be considered for the future:

- Today, and looking into any part of the future, AI innovations appear as seemingly limitless, unbounded digital ecosystems with layers upon layers of intelligent features that seemed impossible just a budget cycle ago (e.g., Sora).

- Today, in a pervasive age of AI, discussions of how to capitalize on innovation and data advancements dominate IT, marketing, and even traditional enclaves such as audit, tax, legal, and the boardroom.

- Today we scan the headlines, seek out needed skill sets, and, in interdepartmental communications, bandy about phrases such as cloud computing, neural networks, federated learning, and even next-horizon solutions that include large action models (LAMs, e.g., Rabbit 1.0).

Yet, beyond these innovative, singular ideas, where are the intersection points, the active management, and the regulatory oversight, and who will deliver them, and how? For the last 16 months, Gen AI has reworked traditional practices and spurred innovation, but it represents only the tip of the spear. To come to grips with the AI hyper-innovation cycle now underway, industry leaders must look beyond vendor solutions.

The future requires a proactive integration of innovative research tempered by domain market forces, consumer behaviors, AI technology (e.g., chips and software), and digital data explosions, all glued together by security, legal, and regulatory requirements. It is a future that demands layers of integrated solutions, all requiring transparency, heterogeneity, and risk attribution. Herein is the core business challenge: how will all the pieces fit, and at what cost?

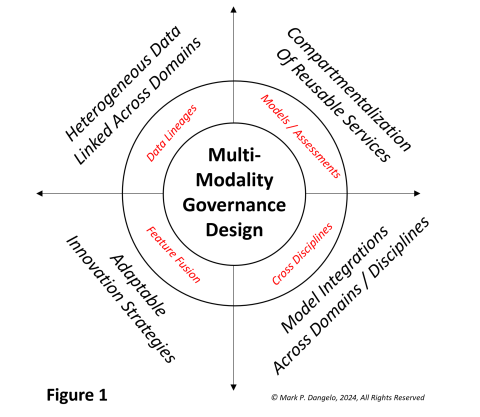

At its core, AI is a data-driven solution. At its edges, AI represents an ability to extend data ideation using building blocks of functionality uniquely assembled—but how? Let’s discuss an illustrative representation of delivering AI Governance by design versus the traditional siloed product mindsets of “one-and-done.”

Figure 1 shows that organizations will be required to cost-effectively blend results (i.e., vendor solutions) with research (i.e., deep-channel innovations) to arrive at a federated model that adapts to changing technology, business conditions, and regulation.

While this idea is not completely foreign, it blurs the lines between what is coming and what is possible (i.e., research) and what is available and how it can be adopted (i.e., vendor and outsourcing solutions).

At a macro level, when the disciplines of legal, regulatory compliance, or risk management think of solutions (data, technology, organizational), they traditionally look at siloed “threads” of capabilities, such as the headline topics of generative AI, data repositories, or process automation. It is a singular approach aimed at incremental efficiencies or the inclusion of additional insight, and therein is the fatal mistake. Avoiding it requires asking the fundamental business and architectural questions taught to first-year business students: what, how, why, where, and who.

The complexities of AI, its vast ability to disintermediate processes, and its data foundation require strategies and architectures that weave together rapidly evolving technologies, all of which drive organizational change and reshape the skills within. What is the approach, design, or outcome? And, from business leaders, what will it cost compared with the risks of non-compliance?

Around these two questions comes a set of emerging designs to actively manage federated (i.e., multimodal) products and capabilities linked across a fabric of solutions, analogous to assembling Lego bricks (based on traditional architectural designs such as Zachman's, updated with compartmentalized meshes such as Dehghani's).

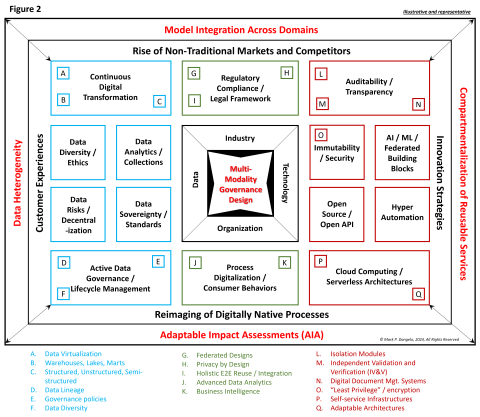

Figure 2 represents a comprehensive blueprint to actively address the demands of AI governance encased within results-oriented, business-demanded delivery. It is indeed a picture worth 10,000 words, and one that implies numerous upskilling demands.

On first studying Figure 2, business leaders are struck by its complexity and divisional challenges. For larger firms across highly regulated industries (e.g., banking, financial services, and insurance, or BFSI), these designs are already being discussed across their multibillion-dollar budgets and thousands of IT staff. For medium and smaller firms, designing active AI governance against such a blueprint seems impossible at first glance.

Yet the development of these blueprints or fabrics has already been done. Looking across industries and disciplines, we can leverage examples from privacy-by-design solutions, outsourcers seeking to modernize their products for consumers, or brand-name consulting leaders who are not just proactively engaging their customers but assembling solutions that meet future needs.

Researchers in industry and academia bring a deep understanding of each unique area, which provides the roadmaps for adaptation in the face of hyperscale and rapid-cycle technologies. This is where corporate leaders driven by results must balance what is available with what is possible, now that the innovation cycles for AI advancements are measured in weeks and months, not years.

Ultimately, innovative advancements driven by AI’s rapid-cycle developments represent a step-function shift in which the adoption of one-off technology by itself will lead to unsustainable results or cascading effects that harm downstream operations. As an example, Gen AI is new to the markets. What will future releases do to the operating methods assembled for staff to interact and perform their duties? How fast can controls, skills, and layers of features be assembled and disassembled?

These AI realities, coupled with regulators and their exploding oversight legislation (EU AI Act, US Executive actions, state AI ethical regulations, Digital Services Act, US AI Environmental Impact Act, FCC AI Act, US Investment Act), demand more than the traditional adoption of siloed regulatory technology (RegTech) to create governance solutions. The roadmap of tomorrow’s AI governance demands a blending of results with research.

Only with a holistic approach to AI governance will enterprises and their researchers arrive at a workable and efficient solution to regulation. Regulation is the glue that demands integration. Integration is demanded by fragmented solutions. And solutions ensure that the enterprise can be profitable in the face of new and opaque market forces. Linking them all together will be unfamiliar, but it is the solution that cannot be left to chance.

In the end, AI governance is about design—not just products, technology, data or even regulators.